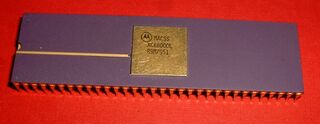

Motorola 68000



| Motorola 68000 |
|---|
| Designer: Motorola |

The Motorola 68000 is a CISC (Complex instruction set computer) microprocessor, the first member of a successful family of microprocessors from Motorola, which were all mostly software compatible. The entire series was often referred to as the m68k, or simply 68k.

A version manufactured by Hitachi, the FD1094, was used in various Sega arcade systems.


History

Originally, the MC68000 was designed for use in household products (at least, that is what an internal memo from Motorola claimed). It went on to be used in computers such as the Apple Macintosh, Commodore Amiga and Atari ST, the original Sun Microsystems UNIX machines and the Apollo/Domain workstations. It also served as the main CPU of the Sega Mega Drive, the Neo Geo and several arcade machines. In the Sega Saturn, the 68000 was used as the sound processor, and in the Atari Jaguar it acted as a main controller for the other dedicated hardware ICs. The 68000 was also used in Silicon Graphics' IRIS 1000 and 1200 terminals.

68000 derivatives persisted in the UNIX market for many years, because the architecture so strongly resembles the Digital PDP-11 and VAX and is an excellent target for C code. The 68000 eventually saw its greatest success as a controller. Thousands of HP, Printronix and Adobe printers used it. Its derivative microcontrollers, the CPU32 and ColdFire processors, have been manufactured in the millions as automotive engine controllers. It is also used by medical equipment manufacturers and many printer manufacturers because of its low cost, convenience, and good stability. As of 2001, the DragonBall versions of the processor are used in the popular Palm series of PDAs from Palm Computing and in Handspring's Visor, though the architecture is being phased out in favor of the ARM processor core. The Motorola 68000 is also used in Texas Instruments' latest line of graphing calculators.

Initial samples of the 68000 were released in 1979, and the chip competed against the Intel 8086 and Intel 80286 with some success. By 1982 it was clocked at a then blisteringly fast 8 MHz, with the simplest instructions taking four clocks and the most complex ones requiring many more. However, a single 68000 instruction typically did more work than an instruction on the competing Intel processors. Motorola ceased production of the 68000 in 2000, although derivatives, notably the CPU32 family, continue in production. As of 2001, Hitachi continued to manufacture the 68000 under license.

Architecture

Address bus

The 68000 was a clever compromise. When it was introduced, 16-bit buses were the most practical size. However, the 68000 was designed with 32-bit registers and a 32-bit address space, on the assumption that hardware prices would fall.

It is important to note that even though the 68000 had a 16-bit ALU, addresses were always manipulated by 32-bit instructions. That is, not only was the address space 32-bit, but flat addressing was used. Contrast this with the 8086, which had a 20-bit address space but could only access 16-bit (64 kilobyte) chunks without resorting to slow extra instructions. The clever 68000 compromise was that, in spite of the data bus and ALU being 16 bits wide, address arithmetic was always 32-bit (further, every data register operation also had a 32-bit version of the instruction). For the complex addressing modes, there was a full-size address adder outside the ALU, so that, for example, a full 32-bit address register postincrement incurred no speed penalty.
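The contrast between segmented and flat addressing can be sketched in a few lines of Python. This is only an illustrative model, not a description of either chip's internals: the function names are invented for this example, and the 68000 side assumes the base form's 24-bit external address bus.

```python
def physical_8086(segment: int, offset: int) -> int:
    """8086 real-mode address: 16-bit segment shifted left by 4, plus a 16-bit offset."""
    return (((segment & 0xFFFF) << 4) + (offset & 0xFFFF)) & 0xFFFFF  # 20 address lines


def physical_68000(address: int) -> int:
    """68000 address: one flat 32-bit value, of which 24 bits reach the bus (assumed base form)."""
    return (address & 0xFFFFFFFF) & 0x00FFFFFF


# 8086: the offset is only 16 bits wide, so stepping past the end of a
# 64 KB chunk wraps around unless the segment register is changed too.
last_in_chunk = physical_8086(0x1000, 0xFFFF)       # 0x1FFFF
wrapped = physical_8086(0x1000, 0xFFFF + 1)         # offset wraps: back to 0x10000
next_chunk = physical_8086(0x2000, 0x0000)          # 0x20000 needs a new segment value

# 68000: the same step is a plain 32-bit increment of an address register.
a0 = 0x0001FFFF
a0_next = physical_68000(a0 + 1)                    # 0x20000, no segment bookkeeping
```

The 68000 half of the sketch is deliberately trivial: software simply holds a 32-bit pointer and adds to it, and the width of the external bus only decides how many of those bits are visible outside the chip.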

So even though it started out as a "16-bit" CPU, the 68000 instruction set describes a 32-bit architecture. The importance of architecture cannot be emphasized enough. Throughout history, addressing pains have not been hardware implementation problems but architecture problems (instruction set problems, i.e. software compatibility problems). The successor 68020, with a 32-bit ALU and a 32-bit data bus, runs unchanged 68000 software at "32-bit speed" and can manipulate up to 4 gigabytes of data, far beyond what software for other "16-bit" CPUs (e.g. the 8086) could do.

To address the perceived markets, the 68000 was designed in three forms. The base form had a 24-bit address bus and a 16-bit data bus. The short form, the 68008, had a 20-bit address bus (22 bits in the later 52-pin package) and an 8-bit data bus. A planned future form (later the 68020) had 32-bit data and address buses.

Internal registers

The CPU had 8 general-purpose data registers (D0-D7) and 8 address registers (A0-A7). The last address register was also the standard stack pointer, and could be called either A7 or SP. This was a good number of registers in many ways: small enough for the 68000 to respond quickly to interrupts (because at most 15 or 16 registers had to be saved), and yet large enough to make most calculations fast.

Having two types of registers was mildly annoying at times, but really not hard to use in practice. Reportedly, it allowed the CPU designers to achieve a higher degree of parallelism, by using an auxiliary execution unit for the address registers.

Status register

The status register (SR) is a specialized register that records conditions set by certain operations as well as system state such as supervisor mode and the interrupt mask. The upper byte of the SR is the system byte, or system control area. Reading from the most significant bit (bit 15) downward, the first bit enables trace mode, which raises a trace exception after each instruction. The next bit is unused, and will be called a "don't care" bit from here on. The bit after that is the supervisor bit, which should always be set when programming for the Mega Drive, but has variable effects on M68000-based computers. The next two bits are "don't care" bits, followed by a 3-bit interrupt mask. The mask holds the highest interrupt level that is not allowed to occur (e.g. %010 allows any interrupt higher than level 2).

The lower byte begins with three "don't care" bits. The remaining five bits are the condition codes, called the CCR in most manuals: e"X"tend, "N"egative, "Z"ero, o"V"erflow, and "C"arry. The negative bit is set whenever the most significant bit of a result is set, i.e. the result is negative when read as a signed number. The zero bit is set whenever an operation yields a zero result. The overflow bit is set whenever a signed result does not fit in the byte, word, or long being operated on, so that its sign comes out wrong. The carry bit is set whenever an arithmetic operation produces a carry out of (or a borrow into) the most significant bit. Arithmetic and shift instructions set the extend bit to the same value as the carry bit, while data movement and logical instructions leave it unchanged. The purpose of the CCR is to support conditional operations such as the Bcc, DBcc, and Scc instructions.
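The bit layout described above can be made concrete with a small decoder. The following Python sketch is illustrative only: the names `StatusRegister`, `decode_sr` and `add_byte_flags` are invented for this example, and `add_byte_flags` models just an 8-bit addition, using the usual two's-complement definitions of carry and signed overflow.

```python
from typing import NamedTuple


class StatusRegister(NamedTuple):
    trace: bool          # bit 15
    supervisor: bool     # bit 13
    interrupt_mask: int  # bits 10-8
    extend: bool         # bit 4
    negative: bool       # bit 3
    zero: bool           # bit 2
    overflow: bool       # bit 1
    carry: bool          # bit 0


def decode_sr(sr: int) -> StatusRegister:
    """Split a 16-bit SR value into its fields; "don't care" bits are ignored."""
    return StatusRegister(
        trace=bool(sr & 0x8000),
        supervisor=bool(sr & 0x2000),
        interrupt_mask=(sr >> 8) & 0b111,
        extend=bool(sr & 0x10),
        negative=bool(sr & 0x08),
        zero=bool(sr & 0x04),
        overflow=bool(sr & 0x02),
        carry=bool(sr & 0x01),
    )


def add_byte_flags(a: int, b: int) -> dict:
    """Condition codes that an 8-bit addition of a and b would produce."""
    a &= 0xFF
    b &= 0xFF
    total = a + b
    result = total & 0xFF
    carry = total > 0xFF
    # Signed overflow: both operands have the same sign, the result does not.
    overflow = bool(~(a ^ b) & (a ^ result) & 0x80)
    return {
        "X": carry,                 # extend follows carry for arithmetic
        "N": bool(result & 0x80),
        "Z": result == 0,
        "V": overflow,
        "C": carry,
    }
```

For example, `decode_sr(0x2700)` reports supervisor mode with an interrupt mask of 7 and all condition codes clear. `add_byte_flags(0x7F, 0x01)` sets N and V but not C (the signed result overflowed), while `add_byte_flags(0xFF, 0x01)` sets Z, C and X but not V.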

The instruction set

The designers attempted to make the assembly language orthogonal. That is, instructions were divided into operations and address modes. Almost all address modes were available for almost all instructions. Many programmers disliked the "near" orthogonality, while others were grateful for the attempt.

At the bit level, the person writing the assembler would clearly see that these "instructions" could become any of several different op-codes. A MOVE, for example, might be assembled as MOVE, MOVEA or MOVEQ depending on its operands. It was quite a good compromise because it gave almost the same convenience as a truly orthogonal machine, and yet also gave the CPU designers freedom to fill in the op-code table.

The minimum instruction size was huge for its day at 16 bits. Furthermore, many instructions and addressing modes appended extension words for addresses, additional address-mode bits, and so on.
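The split into an operation plus addressing modes is visible in the 16-bit opcode word itself. The Python sketch below assembles the opcode word of a MOVE from a size, a source mode and register, and a destination mode and register. It is a simplified illustration: `encode_move` and the `MODES` table are names made up for this example, and only the five register-based modes that need no extension words are covered.

```python
# MOVE has its own size encoding in bits 13-12.
SIZE_BITS = {"b": 0b01, "w": 0b11, "l": 0b10}

# Addressing modes that fit entirely in the opcode word (no extension words).
MODES = {"Dn": 0b000, "An": 0b001, "(An)": 0b010, "(An)+": 0b011, "-(An)": 0b100}


def encode_move(size: str, src_mode: str, src_reg: int,
                dst_mode: str, dst_reg: int) -> int:
    """Return the 16-bit opcode word for MOVE.<size> <src>,<dst>."""
    if dst_mode == "An":
        # Same bit pattern, but assemblers call it MOVEA and apply other rules.
        raise ValueError("an address register destination is written as MOVEA")
    if size == "b" and src_mode == "An":
        raise ValueError("byte-sized access to an address register is not allowed")
    if not (0 <= src_reg <= 7 and 0 <= dst_reg <= 7):
        raise ValueError("register numbers run from 0 to 7")
    return (
        (SIZE_BITS[size] << 12)     # bits 13-12: operand size
        | (dst_reg << 9)            # bits 11-9:  destination register
        | (MODES[dst_mode] << 6)    # bits 8-6:   destination mode
        | (MODES[src_mode] << 3)    # bits 5-3:   source mode
        | src_reg                   # bits 2-0:   source register
    )


move_l_d0_d1 = encode_move("l", "Dn", 0, "Dn", 1)        # MOVE.L D0,D1
move_w_a0_post_d1 = encode_move("w", "(An)+", 0, "Dn", 1)  # MOVE.W (A0)+,D1
push_d0 = encode_move("l", "Dn", 0, "-(An)", 7)          # MOVE.L D0,-(A7)
```

The two `ValueError` cases mark the places where the "near" orthogonality shows: the bit pattern exists, but the combination is either a differently named instruction or not permitted.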

Many designers believed that the M68000 architecture produced compact code for its cost, especially when the code was generated by compilers. Most embedded system designers are acutely aware of the cost of memory, so this belief in more compact code led to many of the architecture's design wins and to much of its longevity. This belief (or feature, depending on the designer) continued to win designs for the instruction set (with updated CPUs) up until the ARM architecture introduced compressed Thumb op-codes that were more compact.

Privilege levels

The CPU, and later the whole family, implemented exactly two levels of privilege. User mode gave access to everything except the interrupt level control. Supervisor mode gave access to everything. Taking an interrupt always put the CPU into supervisor mode. The supervisor bit was stored in the status register and was visible to user programs.

A real advantage of this system was that the supervisor level had a separate stack pointer. This permitted a multitasking system to use very small stacks for tasks, because the designers did not have to allocate the memory required to hold the stack frames of a maximum stack-up of interrupts.

Interrupts

The CPU recognized 8 interrupt priority levels, 0 through 7, which were strictly prioritized: a higher-numbered interrupt could always interrupt a lower-numbered one. A privileged instruction could set the interrupt mask in the status register, which blocked interrupts at or below that level. Level 7 was not maskable. Code running at level 0 could be interrupted by any higher level. The current level was stored in the status register and was visible to user-level programs.
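The masking rule can be written down as a short predicate. The Python sketch below is a simplified model with invented function names: it treats level 7 as always accepted and ignores bus timing. The second function reflects the usual description of interrupt entry, in which the CPU enters supervisor mode, clears trace, and raises the mask to the level being serviced.

```python
def interrupt_accepted(request_level: int, mask: int) -> bool:
    """Would a request at request_level be taken while the SR mask is mask?"""
    if not (0 <= request_level <= 7 and 0 <= mask <= 7):
        raise ValueError("levels and masks run from 0 to 7")
    if request_level == 0:
        return False                 # level 0 means no request is pending
    if request_level == 7:
        return True                  # level 7 cannot be masked (simplified)
    return request_level > mask      # only strictly higher levels get through


def sr_after_interrupt(sr: int, level: int) -> int:
    """SR value once an interrupt at the given level has been accepted."""
    sr &= ~0x8700 & 0xFFFF           # clear trace bit and the old mask
    sr |= 0x2000                     # interrupts always run in supervisor mode
    sr |= (level & 0b111) << 8       # mask now blocks this level and below
    return sr
```

With a mask of %010, `interrupt_accepted(2, 2)` is false and `interrupt_accepted(3, 2)` is true. After a level 4 interrupt is taken from user mode, `sr_after_interrupt(0x0000, 4)` gives `0x2400`, so a further level 4 request waits while a level 5 request can still preempt.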

The "exception table" (interrupt vector addresses) was fixed at addresses 0 through 1023, permitting 256 32-bit vectors. The first vector was the starting stack address, and the second was the starting code address. Vectors 2 through 15 were used to report various errors and traps: bus error, address error, illegal instruction, zero division, the CHK vector (shared with CHK2 on later family members), TRAPV, privilege violation, trace, the line 1010 and line 1111 emulators, and some reserved vectors, one of which later family members used for hardware breakpoints. Vector 24 started the real interrupts: the spurious interrupt (i.e. one that was not properly hand-shaken) and the level 1 through level 7 autovectors. These were followed by the 16 TRAP vectors, some more reserved vectors, and finally the user-defined vectors.
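Because every vector is a 32-bit address and the table starts at address 0, the location of any vector follows from simple arithmetic. The Python sketch below shows the calculation; the helper names are invented for this example, and it assumes 16 TRAP vectors (TRAP #0 through TRAP #15) starting at vector 32, as in Motorola's documentation.

```python
VECTOR_SIZE = 4           # each vector is one 32-bit address
SPURIOUS_VECTOR = 24      # autovectors for levels 1-7 follow directly
FIRST_TRAP_VECTOR = 32    # TRAP #0


def vector_address(number: int) -> int:
    """Memory address of exception vector `number` (0-255)."""
    if not 0 <= number <= 255:
        raise ValueError("vector numbers run from 0 to 255")
    return number * VECTOR_SIZE


def autovector(level: int) -> int:
    """Vector number used for an autovectored interrupt at level 1-7."""
    if not 1 <= level <= 7:
        raise ValueError("autovectors exist for levels 1 to 7")
    return SPURIOUS_VECTOR + level


def trap_vector(n: int) -> int:
    """Vector number used by the TRAP #n instruction (n = 0-15)."""
    if not 0 <= n <= 15:
        raise ValueError("TRAP numbers run from 0 to 15")
    return FIRST_TRAP_VECTOR + n


initial_stack = vector_address(0)               # 0x000
initial_pc = vector_address(1)                  # 0x004
level6_handler = vector_address(autovector(6))  # 0x078
trap0_handler = vector_address(trap_vector(0))  # 0x080
last_vector = vector_address(255)               # 0x3FC, i.e. bytes 1020-1023
```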

The interrupt controller was originally a separate IC, the 68901. This chip performed a rather complicated dance on the bus to translate the seven pull-down interrupt lines into vectored interrupts (level 1 through 7). The 68901 also provided a basic UART and timers that could generate periodic interrupts. The Motorola 68901 had a number of severe defects, including the ability to lose the highest-priority interrupt if it and the clock interrupt happened within some window of each other. The Mostek part was superior.

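The fixed location of the vector table was a practical problem. One wants a ROM bootstrap, which needs the reset vectors to be in ROM, but once the OS is up, one wants installable vectors in RAM. A lot of effort went into circumventing the fixed vectors, either through trampolines (ROM vectors that point at jump tables in RAM) or through bank-switchable memory.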
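The trampoline approach can be sketched as follows. In this Python model, every ROM vector points at a fixed 6-byte slot in RAM, and the OS installs a handler by writing a `JMP` absolute-long instruction (opcode word `$4EF9` followed by a 32-bit address) into that slot. The RAM base address `0xFF0000` and all function names are hypothetical, chosen only for illustration; a real system would also keep the two reset vectors pointing into ROM.

```python
import struct

JMP_ABS_LONG = 0x4EF9      # opcode word of JMP <32-bit absolute address>
SLOT_SIZE = 6              # 2-byte opcode + 4-byte target address
RAM_TABLE_BASE = 0xFF0000  # hypothetical location of the RAM jump table


def rom_vector_table(count: int) -> bytes:
    """ROM image of `count` vectors, each pointing at its RAM slot."""
    return b"".join(
        struct.pack(">I", RAM_TABLE_BASE + n * SLOT_SIZE) for n in range(count)
    )


def install_handler(ram_table: bytearray, vector: int, handler: int) -> None:
    """Write 'JMP handler' into the RAM slot that ROM vector `vector` targets."""
    offset = vector * SLOT_SIZE
    ram_table[offset:offset + SLOT_SIZE] = struct.pack(">HI", JMP_ABS_LONG, handler)


rom = rom_vector_table(4)
ram = bytearray(4 * SLOT_SIZE)
install_handler(ram, 2, 0x00001234)   # redirect vector 2 to code at $1234
```

The ROM contents never change; only the jump targets in RAM do, at the cost of one extra `JMP` on every exception.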

Another problem was the "privilege" logic. The designers had clearly planned on having a minimal, but adequate, two-tiered security system to support something like a UNIX kernel. This would limit the ability of user programs to access hardware and interrupts.

The 68000 was also unable to correctly return from an exception on a failing memory access, a crucial feature for true virtual memory. To simulate unlimited RAM, one wants to take an interrupt when a memory access fails, and have the interrupt routine allocate a block of real RAM and read the missing data from disk into it. Several companies did succeed in making 68000-based Unix workstations with working virtual memory, by using two 68000 chips running in parallel on differently phased clocks. When the "leading" 68000 encountered a bad memory access, extra hardware would interrupt the "main" 68000 to prevent it from also encountering the bad memory access. This interrupt routine would handle the virtual memory functions and restart the "leading" 68000 in the correct state to continue properly synchronized operation when the "main" 68000 returned from the interrupt. Obviously this is an expensive, tricky, and very inconvenient technique, and these companies upgraded to the 68010 as quickly as possible.

The 68000 did not meet the Popek and Goldberg virtualization requirements because it had a single unprivileged instruction, "MOVE from SR", which allowed user-mode software read-only access to a small amount of privileged state.

These problems were fixed in the next major revision of the 68K architecture, with the release of the MC68010. The Bus Error and Address Error exceptions pushed a large amount of internal state onto the supervisor stack in order to facilitate recovery, and the MOVE from SR instruction was made privileged. A new unprivileged "MOVE from CCR" instruction was provided for use in its place by user-mode software; an operating system could trap and emulate user-mode MOVE from SR instructions if desired.
